Resolution, Sampling Rate and Response Time of Pressure Sensors

Resolution

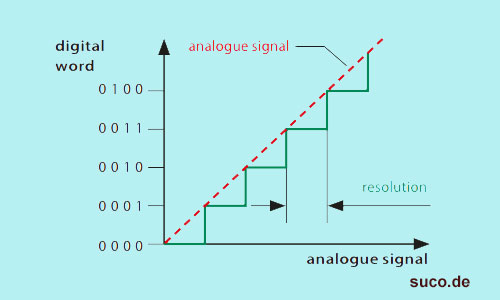

The analog-to-digital resolution of a pressure transmitter is the smallest signal change that the transmitter's signal processing (the analog-digital-analog conversion chain) can register.

It is an important factor in how precisely a pressure transmitter can detect a pressure variation.

The resolution of a pressure transmitter is expressed as a percentage of FSO (Full Scale Output).

For example, a pressure transmitter with a 100 bar measuring range and 13-bit resolution divides its signal into 8192 steps ($2^{13}$), so the smallest detectable change is 100 bar / 8192 ≈ 0.012 bar.

On most advanced transmitters, a resolution of 12 bits (4096 steps, $2^{12}$) is typical. With a 100 bar range, pressure changes of 100 bar / 4096 ≈ 0.024 bar (about 0.024% FSO) can then be measured.
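The arithmetic above can be sketched in a few lines of Python. This is a minimal illustration that assumes, as the text does, that the measuring range is divided into $2^n$ equal steps; the function names are hypothetical.

```python
def resolution_step(span: float, bits: int) -> float:
    """Smallest resolvable change when `span` is divided into 2**bits equal steps."""
    return span / (2 ** bits)


def resolution_percent_fso(bits: int) -> float:
    """The same resolution expressed as a percentage of full-scale output (FSO)."""
    return 100.0 / (2 ** bits)


if __name__ == "__main__":
    span_bar = 100.0  # measuring range from the example in the text
    for bits in (12, 13):
        steps = 2 ** bits
        print(f"{bits}-bit: {steps} steps, "
              f"{resolution_step(span_bar, bits):.4f} bar, "
              f"{resolution_percent_fso(bits):.4f} % FSO")
```

For the 100 bar range this prints 0.0244 bar for 12 bits and 0.0122 bar for 13 bits, matching the figures in the text.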

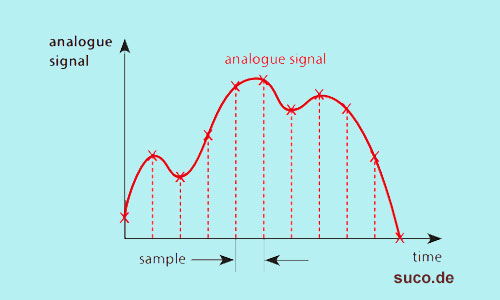

Sampling Rate

The sampling rate, or sampling frequency, is the number of samples per unit of time (per second or per millisecond) taken from the continuous analog signal to produce a digital signal.

It indicates how quickly the output signal of a pressure sensor can follow a pressure change at the input.

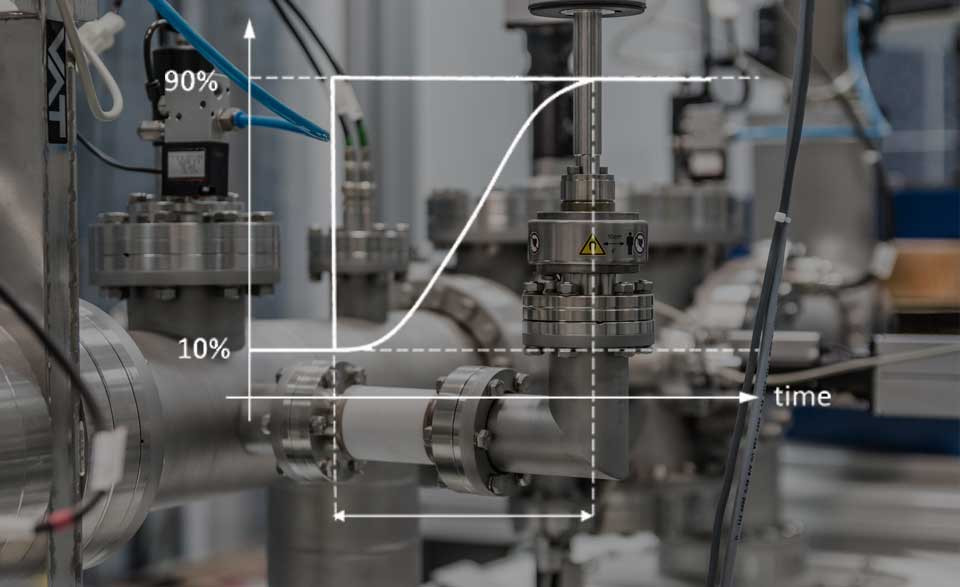

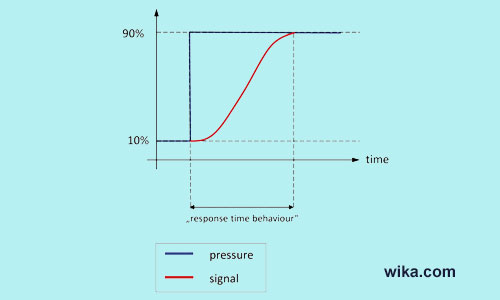
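As a rough illustration of why the sampling rate limits how quickly a change can appear in the output, the sketch below computes the sampling interval and the time of the first sample that can register a pressure change. It assumes ideal, evenly spaced samples at t = k / f; the function names, the 1 kHz rate and the 2.3 ms event time are hypothetical, not values from a specific sensor.

```python
import math


def sampling_interval_ms(rate_hz: float) -> float:
    """Time between two consecutive samples, in milliseconds."""
    return 1000.0 / rate_hz


def first_sample_after(t_event_ms: float, rate_hz: float) -> float:
    """Time of the first sample taken at or after a pressure change.

    Assumes ideal samples at t = k * interval (k = 0, 1, 2, ...).
    """
    dt = sampling_interval_ms(rate_hz)
    return math.ceil(t_event_ms / dt) * dt


if __name__ == "__main__":
    rate = 1000.0  # hypothetical sampling rate: 1 kHz
    change = 2.3   # hypothetical moment of the pressure change, in ms
    seen = first_sample_after(change, rate)
    print(f"interval {sampling_interval_ms(rate):.1f} ms, "
          f"change at {change} ms first sampled at {seen:.1f} ms "
          f"(delay {seen - change:.1f} ms)")
```

With a 1 kHz sampling rate, a change can go unregistered for up to one sampling interval (1 ms); raising the rate shortens this worst-case delay.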

Response Time

Response time is the time the sensor needs for a change in the input pressure to be reflected in the output signal.

It is also commonly referred to as:

- settling time

- rise time

- sensor time constant

In other words, it is the interval the sensor output signal needs, after an instantaneous full-scale pressure change, to change from 0 to 63.2% of full scale (the time-constant definition) or to reach its final, stable value (the settling-time definition).
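The 63.2% figure comes from the step response of an ideal first-order system, which is the usual model behind the time-constant definition; real sensors can deviate from it, for example through dead time or overshoot. Assuming such a model with time constant $\tau$, output starting at 0 and final output $y_\infty$:

$$
y(t) = y_\infty\left(1 - e^{-t/\tau}\right), \qquad
y(\tau) = y_\infty\left(1 - e^{-1}\right) \approx 0.632\, y_\infty
$$

Under the same assumption, the remaining error decays exponentially, which gives the time $t_\varepsilon$ after which the output stays within a fraction $\varepsilon$ of its final value:

$$
y_\infty - y(t) = y_\infty\, e^{-t/\tau} \le \varepsilon\, y_\infty
\;\Longleftrightarrow\;
t \ge t_\varepsilon = \tau \ln\frac{1}{\varepsilon}
$$

For $\varepsilon = 1\%$ this is about $4.6\,\tau$.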

The response time is formed by all A/D and D/A conversions and the analog and digital filters in the signal chain from the measuring point to the output.
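How several stages add up to one response time can be sketched by modeling the chain as a pure conversion delay followed by a single first-order digital low-pass filter. This is a simplified, hypothetical model (real transmitters may use different filters); the delay, the filter coefficient `alpha` and the 1 ms sample period are illustrative assumptions.

```python
def chain_step_response(n_samples, delay_samples, alpha):
    """Response of a hypothetical signal chain to a unit pressure step at sample 0.

    Stage 1: pure delay of `delay_samples` (e.g. A/D and D/A conversion time).
    Stage 2: first-order digital low-pass filter (exponential smoothing, 0 < alpha <= 1).
    """
    # Stage 1: the step only reaches the filter after the conversion delay
    delayed = [0.0 if k < delay_samples else 1.0 for k in range(n_samples)]
    # Stage 2: each output moves a fraction alpha toward the current input
    out, y = [], 0.0
    for x in delayed:
        y += alpha * (x - y)
        out.append(y)
    return out


def samples_to_reach(response, fraction=0.632):
    """Index of the first sample at which the output reaches `fraction` of the unit step."""
    for k, y in enumerate(response):
        if y >= fraction:
            return k
    return None


if __name__ == "__main__":
    period_ms = 1.0  # hypothetical sample period
    for delay, alpha in [(2, 1.0), (2, 0.5), (2, 0.2)]:
        k = samples_to_reach(chain_step_response(50, delay, alpha))
        print(f"delay={delay} samples, alpha={alpha}: "
              f"63.2% reached after {k * period_ms:.0f} ms")
```

With the same conversion delay, a heavier filter (smaller `alpha`) pushes the 63.2% point from 2 ms to 3 ms and then 6 ms, so each stage in the chain contributes to the overall response time.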

On most standard, modern pressure sensors, this time is usually below 2 to 4 milliseconds, depending on the model.

In some cases, such as submersible pressure sensors, it is often longer than 100 ms.

Depending on the particular design, dead time and overshoot also affect the response time of pressure sensors.

In mobile hydraulics, where load cycles are high, short response times are recommended. In slow applications, such as level measurement with submersible pressure sensors, longer response times are usually an advantage because they damp short-term pressure fluctuations.
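The trade-off for level measurement can be illustrated with a simple exponential smoothing filter, where a smaller coefficient means a longer response time. This is a minimal sketch, not how any particular sensor implements damping; the level signal, the alternating ±0.1 bar wave ripple and both `alpha` values are hypothetical.

```python
def smooth(signal, alpha):
    """First-order (exponential) smoothing; a smaller alpha means a longer response time."""
    out, y = [], signal[0]
    for x in signal:
        y += alpha * (x - y)
        out.append(y)
    return out


def ripple(signal, skip):
    """Peak-to-peak variation after the first `skip` samples (start-up transient ignored)."""
    tail = signal[skip:]
    return max(tail) - min(tail)


if __name__ == "__main__":
    # Hypothetical level measurement: 1.0 bar hydrostatic pressure
    # with a +/-0.1 bar ripple from waves, alternating every sample.
    level = [1.0 + 0.1 * (-1) ** k for k in range(400)]
    fast = smooth(level, alpha=0.9)  # short response time
    slow = smooth(level, alpha=0.1)  # long response time
    print(f"ripple with short response time: {ripple(fast, 200):.3f} bar")
    print(f"ripple with long response time:  {ripple(slow, 200):.3f} bar")
```

The slow filter reduces the output ripple from about 0.16 bar to about 0.01 bar, at the cost of following a genuine level change more slowly.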

The following terms are closely related to the definition of response time.

Dead Time

Dead time is the minimum time that elapses after a step change is applied to the input before the sensor output begins to change and respond. It can also be defined as the time after each event during which the system cannot measure another event.
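For the first definition, dead time can be read off a sampled step response as the last moment at which the output has not yet moved. The sketch below assumes a hypothetical first-order-plus-dead-time model with 5 ms dead time, 10 ms time constant and 1 ms sampling; these values and the function names are illustrative only, and the estimate can be no finer than the sample period.

```python
import math


def fopdt_step(t_ms, dead_time_ms, tau_ms):
    """Hypothetical first-order-plus-dead-time response to a unit step applied at t = 0."""
    if t_ms < dead_time_ms:
        return 0.0  # output has not reacted yet
    return 1.0 - math.exp(-(t_ms - dead_time_ms) / tau_ms)


def estimate_dead_time(times, outputs, tol=1e-6):
    """Last sample time at which the output is still at its initial value.

    The true dead time lies between this sample and the next one.
    """
    last_idle = times[0]
    for t, y in zip(times, outputs):
        if abs(y - outputs[0]) > tol:
            break
        last_idle = t
    return last_idle


if __name__ == "__main__":
    times = [k * 1.0 for k in range(50)]                 # 1 ms sampling (hypothetical)
    outputs = [fopdt_step(t, 5.0, 10.0) for t in times]  # 5 ms dead time, 10 ms time constant
    print(f"estimated dead time: {estimate_dead_time(times, outputs):.1f} ms")
```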

Time Constant

The time constant is the time the sensor needs, after a rapid (step) change in the measured quantity, for its output to reach 63.2% of the total step change. The output then continues to approach the final value until the sensor measures within its usually specified accuracy tolerance.

It can also be expressed as the sum of the mechanical response time (MRT) and the electronic response time (ERT).
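To make the definition concrete, the sketch below reads the time constant off a recorded step response (the first sample at or above 63.2% of the total change) and also reports when the output stays within a tolerance band, which corresponds to the "within the accuracy tolerance" part. It assumes a rising step that begins at the first sample and a record long enough that the last sample is the settled value; the simulated first-order data, the 2 ms time constant and the 1% band are hypothetical.

```python
import math


def time_constant(times, outputs):
    """First time a rising step response reaches 63.2 % of its total change.

    Assumes the record starts at the step and the last sample is the settled value.
    """
    y0, y_final = outputs[0], outputs[-1]
    target = y0 + 0.632 * (y_final - y0)
    for t, y in zip(times, outputs):
        if y >= target:
            return t
    return None


def settling_time(times, outputs, tol_fraction):
    """First time after which the output stays within tol_fraction of the step size
    around the final value (also copes with overshoot and ringing)."""
    y0, y_final = outputs[0], outputs[-1]
    band = tol_fraction * abs(y_final - y0)
    # Walk backwards to the last sample that is still outside the band
    for i in range(len(outputs) - 1, -1, -1):
        if abs(outputs[i] - y_final) > band:
            return times[i + 1] if i + 1 < len(times) else None
    return times[0]


if __name__ == "__main__":
    tau = 2.0                              # hypothetical time constant, ms
    times = [k * 0.1 for k in range(300)]  # 0.1 ms sampling, 30 ms record
    outputs = [1.0 - math.exp(-t / tau) for t in times]
    print(f"time constant ~ {time_constant(times, outputs):.1f} ms")
    print(f"within 1 % after ~ {settling_time(times, outputs, 0.01):.1f} ms")
```

The settling-time search works backwards from the end of the record so that a response that overshoots and then re-enters the band is still handled correctly.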

You can also read the following articles to learn more about pressure sensors:

Measuring Principle of Pressure Sensors

An Eye-Opening Guide To Pressure sensor Types: Everything You Need To Know

Types of Pressure (Absolute Pressure, Gauge Pressure, Differential Pressure)

Accuracy of Pressure Transmitters
